What AI in Dentistry Can and Cannot Do for Patients in Hamilton

Why patients in Hamilton are hearing more about AI in dentistry now

Artificial intelligence, often called AI, is now a real topic in dental regulation and professional guidance in Ontario. In March 2025, the Royal College of Dental Surgeons of Ontario, or RCDSO, reported that it had developed draft guidance on artificial intelligence in dentistry to help dentists use these tools responsibly and ethically. The RCDSO also noted that its education programming on the topic drew very strong interest from the profession.

For patients and families in Hamilton, that matters because it means AI is not just a marketing term. It is being discussed as a practical, ethical, and clinical issue. The important point is that AI in dentistry is being treated as a support tool that must fit within professional standards, not as a replacement for clinical care.

What AI in dentistry usually means in a dental office

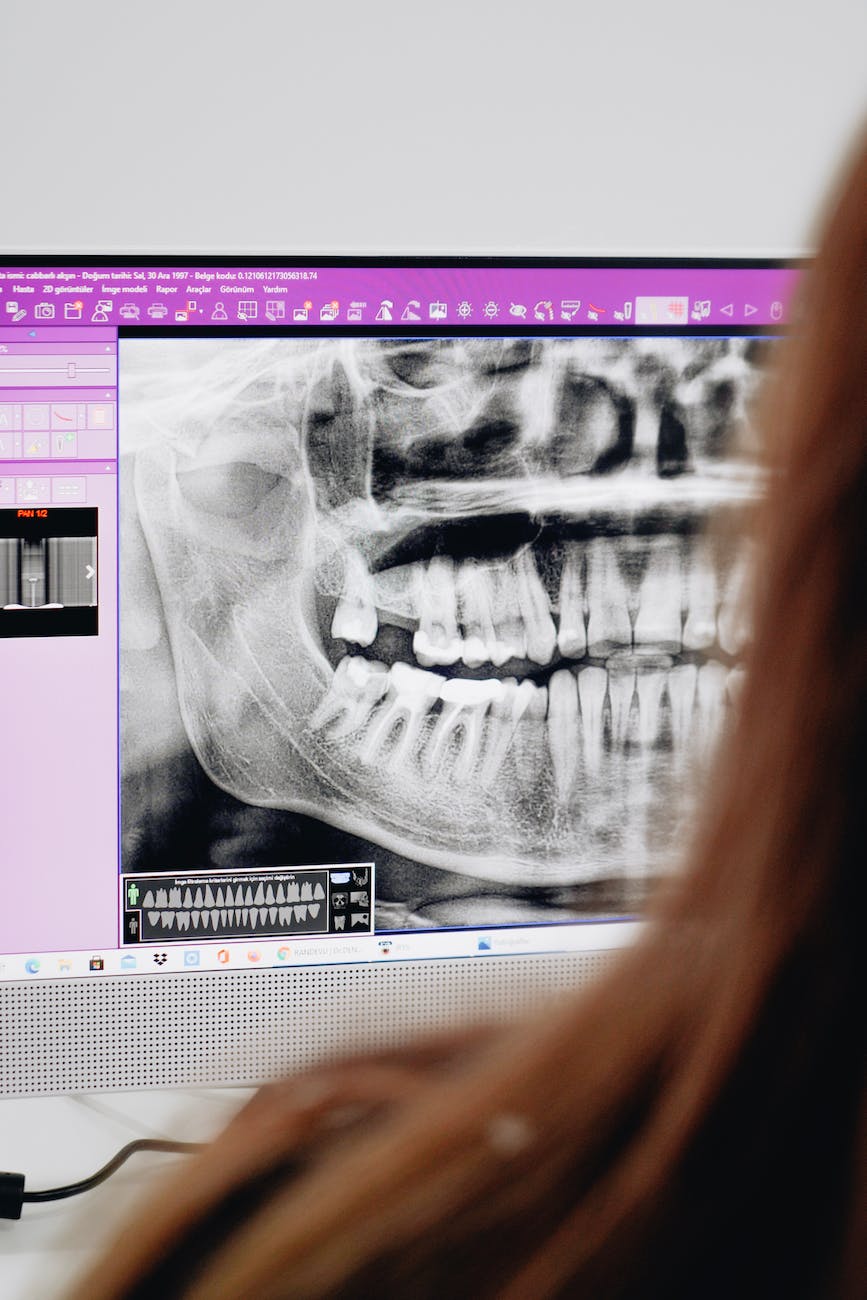

In plain language, AI in dentistry usually means software that helps analyze dental images such as X-rays. It may highlight areas that look suspicious for decay, bone changes, or other findings so the dentist can review them more carefully.

Right now, the strongest use case is image interpretation support. In other words, AI may act like a second set of eyes on a radiograph. It does not know your symptoms, your medical history, how long a problem has been present, whether a tooth responds normally on testing, or what matters most to you when making treatment decisions.

That is why AI support is very different from an independent diagnosis. A diagnosis still depends on the clinical exam, your history, risk factors, the quality and type of image taken, and the dentist’s judgment.

Where AI may help: reviewing radiographs for possible caries or periapical findings

Recent research suggests that AI shows promise in selected imaging tasks, especially when reviewing dental radiographs. A 2025 systematic review and meta-analysis on AI for caries (tooth decay) detection found encouraging accuracy overall, but also substantial variation between studies. That variation matters because results may differ across image types, software systems, patient populations, and office settings.

A broader 2024 systematic review of AI in dentomaxillofacial imaging found that many studies focused on narrow imaging tasks such as tooth identification, cephalometric landmarks, and lesion detection, including periapical findings. The review concluded that results were promising, but that further studies are still needed before these models can be confidently integrated into everyday practice across real-world scenarios.

For patients, the practical takeaway is simple. AI may help your dentist review an image more consistently or notice an area worth a second look. That is useful, but it is not the same as proving better long-term health outcomes for every patient.

What the newer evidence shows: modest improvement, especially fewer false positives in one 2025 trial

One of the more useful newer studies for patients is a 2025 randomized controlled trial on diagnosing periapical radiolucencies (dark areas near the tip of a tooth root on an X-ray) on panoramic radiographs. In that study, AI assistance improved overall diagnostic accuracy from 91.6 percent without AI to 93.3 percent with AI. The main improvement was a reduction in false positives. This means dentists were less likely to label a healthy area as diseased when AI support was available. Sensitivity, the share of true lesions that dentists correctly identified, stayed about the same.
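It may help to see how overall accuracy can rise while sensitivity stays flat. The short Python sketch below works through the arithmetic with made-up counts. These numbers are hypothetical and are not taken from the 2025 trial. The `Counts` class and its method names are illustrative only. The only difference between the two scenarios is that fewer healthy sites are wrongly flagged.

```python
from dataclasses import dataclass


@dataclass
class Counts:
    """Hypothetical tally of a dentist's calls compared with the true status."""
    true_positive: int   # disease present, called present
    false_negative: int  # disease present, but missed
    false_positive: int  # no disease, but called present
    true_negative: int   # no disease, correctly called absent

    def total(self) -> int:
        return (self.true_positive + self.false_negative
                + self.false_positive + self.true_negative)

    def accuracy(self) -> float:
        # Share of all calls that were correct
        return (self.true_positive + self.true_negative) / self.total()

    def sensitivity(self) -> float:
        # Share of real disease that was caught
        return self.true_positive / (self.true_positive + self.false_negative)

    def specificity(self) -> float:
        # Share of healthy sites correctly left alone
        return self.true_negative / (self.true_negative + self.false_positive)


# Invented example: 200 sites, 50 with disease and 150 without.
# With AI support, 8 fewer healthy sites are wrongly flagged; nothing else changes.
without_ai = Counts(true_positive=40, false_negative=10, false_positive=20, true_negative=130)
with_ai = Counts(true_positive=40, false_negative=10, false_positive=12, true_negative=138)

for label, c in (("without AI", without_ai), ("with AI", with_ai)):
    print(f"{label}: accuracy={c.accuracy():.2f} "
          f"sensitivity={c.sensitivity():.2f} specificity={c.specificity():.2f}")
```

With these invented numbers, accuracy rises from 0.85 to 0.89 and specificity rises from about 0.87 to 0.92. Sensitivity stays at 0.80 because the number of true lesions caught does not change. The real trial reported different figures, but the same arithmetic shows how fewer false positives can raise overall accuracy on their own.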

The same trial found that less experienced dentists benefited the most, and AI support was associated with more conservative treatment decisions. That is encouraging because fewer false positives may reduce the chance of overtreatment in some situations.

Still, this was one study on one type of finding, using one imaging context. It should not be treated as proof that AI improves all areas of dental diagnosis or treatment planning.

Why the evidence is promising but still limited

The current evidence base is growing, but it has important limits. Many studies look at how well software performs on image interpretation tasks rather than whether patients do better over time. Studies also differ in the types of radiographs used, the quality of the datasets, the reference standards, and the experience level of the dentists involved.

In the 2025 caries review, statistical heterogeneity was high, which is a technical way of saying the study results varied a lot. When that happens, it becomes harder to apply one summary number to every patient and every practice.

That is why a careful, evidence-based message is more helpful than hype. AI may be useful in certain tasks, especially radiographic review, but real-world clinical benefit is still being clarified.

What AI cannot do on its own: diagnose you as a whole person or choose treatment in context

Good dental care is about more than reading an image. A small shadow on an X-ray can mean different things depending on whether you have pain, swelling, a cracked tooth, past root canal treatment, a history of frequent decay, dry mouth, gum disease, trauma, or other risk factors.

AI cannot examine your mouth, test a tooth, feel a swelling, discuss your goals, or weigh treatment options in the context of your health and preferences. It also cannot replace informed consent. Even if software flags a possible problem, your dentist still needs to explain what was seen, how certain the finding is, what the alternatives are, and whether monitoring, additional testing, or treatment makes sense.

Just as important, AI does not make unnecessary X-rays appropriate. Choosing Wisely Canada recommends that dental X-rays and other imaging be prescribed based on each patient’s medical and dental history, clinical findings, and risk assessment, not on a routine schedule. Technology should support justified care, not expand imaging without a reason.

Questions patients can ask if AI is mentioned during their visit

If your dentist mentions AI, it is reasonable to ask a few practical questions:

- How is the software being used in my case?

- What did the software flag on the X-ray?

- What do you agree with, and what do you disagree with?

- How certain are you that this finding is clinically important?

- Would your recommendation change without the software?

- Do I need this image based on my symptoms and risk factors?

These questions support shared decision-making. They can help patients understand the difference between a software prompt and a clinical diagnosis.

Bottom line: useful support tool, not a substitute for evidence-based dental care

For patients in Hamilton, the most accurate way to think about AI in dentistry today is as a support tool. It may serve as a second set of eyes for some X-rays. At least one recent trial suggests it can modestly improve accuracy in a specific imaging task, mainly by reducing false positives. That said, the evidence is still evolving, and the benefits are not uniform across every condition or setting.

The final diagnosis and treatment plan should still come from a dentist who reviews your symptoms, examines you, considers your history and risk factors, and explains your options clearly. Good care still starts with the right exam, the right radiographs when they are actually indicated, and a thoughtful conversation about what is best for you.

Sources

- RCDSO Council Highlights for March 27, 2025

- RCDSO Connect Webinar Series

- Impact of artificial intelligence assistance on diagnosing periapical radiolucencies: A randomized controlled trial

- Accuracy of artificial intelligence in caries detection: a systematic review and meta-analysis

- Applications of artificial intelligence in dentomaxillofacial imaging: a systematic review

- Choosing Wisely Canada: Dentistry Recommendations